Google’s TurboQuant: A Major Breakthrough in AI Memory

Last week, Google unveiled TurboQuant, a new approach to the AI memory problem. Instead of adding memory capacity, it reduces the amount of memory a model needs. This shift could significantly change how AI models are served.

TurboQuant compresses information represented as vectors in high-dimensional spaces. This is similar to what the fictional company Pied Piper achieved with its compression algorithm in the TV show Silicon Valley.

Understanding TurboQuant

TurboQuant consists of two main stages: PolarQuant and QJL (Quantized Johnson-Lindenstrauss). PolarQuant compresses vectors by converting them from Cartesian coordinates to polar coordinates. This method takes advantage of the predictable distribution of angles in high-dimensional spaces.
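To make the Cartesian-to-polar idea concrete, here is a toy two-dimensional sketch. It is not Google's implementation: the function names, the 4-bit angle budget, and the choice to keep the radius at full precision are all assumptions made for illustration. It shows only that a point can be stored as a radius plus a small integer angle code.

```python
import math

def to_polar(x, y):
    """Convert a 2D Cartesian pair to (radius, angle in [-pi, pi])."""
    return math.hypot(x, y), math.atan2(y, x)

def quantize_angle(theta, bits):
    """Map an angle to one of 2**bits equal-width bins (hypothetical scheme)."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    return int((theta + math.pi) / step) % levels

def dequantize_angle(idx, bits):
    """Return the centre of the bin that idx refers to."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    return -math.pi + (idx + 0.5) * step

def compress_pair(x, y, bits=4):
    """Store the radius as-is and the angle as a small integer code."""
    r, theta = to_polar(x, y)
    return r, quantize_angle(theta, bits)

def decompress_pair(r, idx, bits=4):
    """Rebuild an approximate Cartesian pair from radius and angle code."""
    theta = dequantize_angle(idx, bits)
    return r * math.cos(theta), r * math.sin(theta)

r, idx = compress_pair(3.0, 4.0, bits=4)
x_hat, y_hat = decompress_pair(r, idx, bits=4)
print(f"angle code={idx}, reconstruction=({x_hat:.3f}, {y_hat:.3f})")
```

In this toy version the reconstruction always keeps the original length, and the angular error is at most half a bin width. Spending more bits on the angle trades memory for accuracy.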

The second stage, QJL, corrects errors that arise during quantization. It uses a random projection technique to maintain accuracy while adding very little memory overhead. Together, the two stages can cut memory use substantially (up to sixfold for the KV cache) without sacrificing accuracy.
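The random projection idea can be sketched in a few lines. The example below is an illustration under assumptions, not TurboQuant's actual error-correction step. It projects a vector with a random Gaussian matrix, keeps only one sign bit per projected coordinate plus the vector's norm, and then estimates an inner product against an unquantized query. All names (`make_projection`, `encode_signs`, `estimate_inner_product`) and the projection size are hypothetical. The scaling constant relies on the standard fact that for a standard Gaussian vector g, E[(g·q)·sign(g·k)] = sqrt(2/π)·(q·k)/‖k‖.

```python
import math
import random

def make_projection(m, d, seed=0):
    """Random m x d Gaussian matrix, shared by encoder and estimator."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(m)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def encode_signs(S, k):
    """Keep one sign bit per projected coordinate, plus the vector norm."""
    signs = [1 if dot(row, k) >= 0 else -1 for row in S]
    return signs, math.sqrt(dot(k, k))

def estimate_inner_product(S, q, signs, norm):
    """Estimate <q, k> from the sign bits of k and the unquantized query q."""
    total = sum(dot(row, q) * s for row, s in zip(S, signs))
    return math.sqrt(math.pi / 2) * norm * total / len(S)

S = make_projection(m=4000, d=4, seed=0)
q = [1.0, 0.0, 0.0, 0.0]
k = [0.6, 0.8, 0.0, 0.0]
signs, norm = encode_signs(S, k)
print("true inner product:", dot(q, k))
print("estimate from sign bits:", round(estimate_inner_product(S, q, signs, norm), 3))
```

The estimate gets closer to the true value as the number of projections grows, which is the trade-off between extra bits stored and accuracy recovered.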

Why It Matters

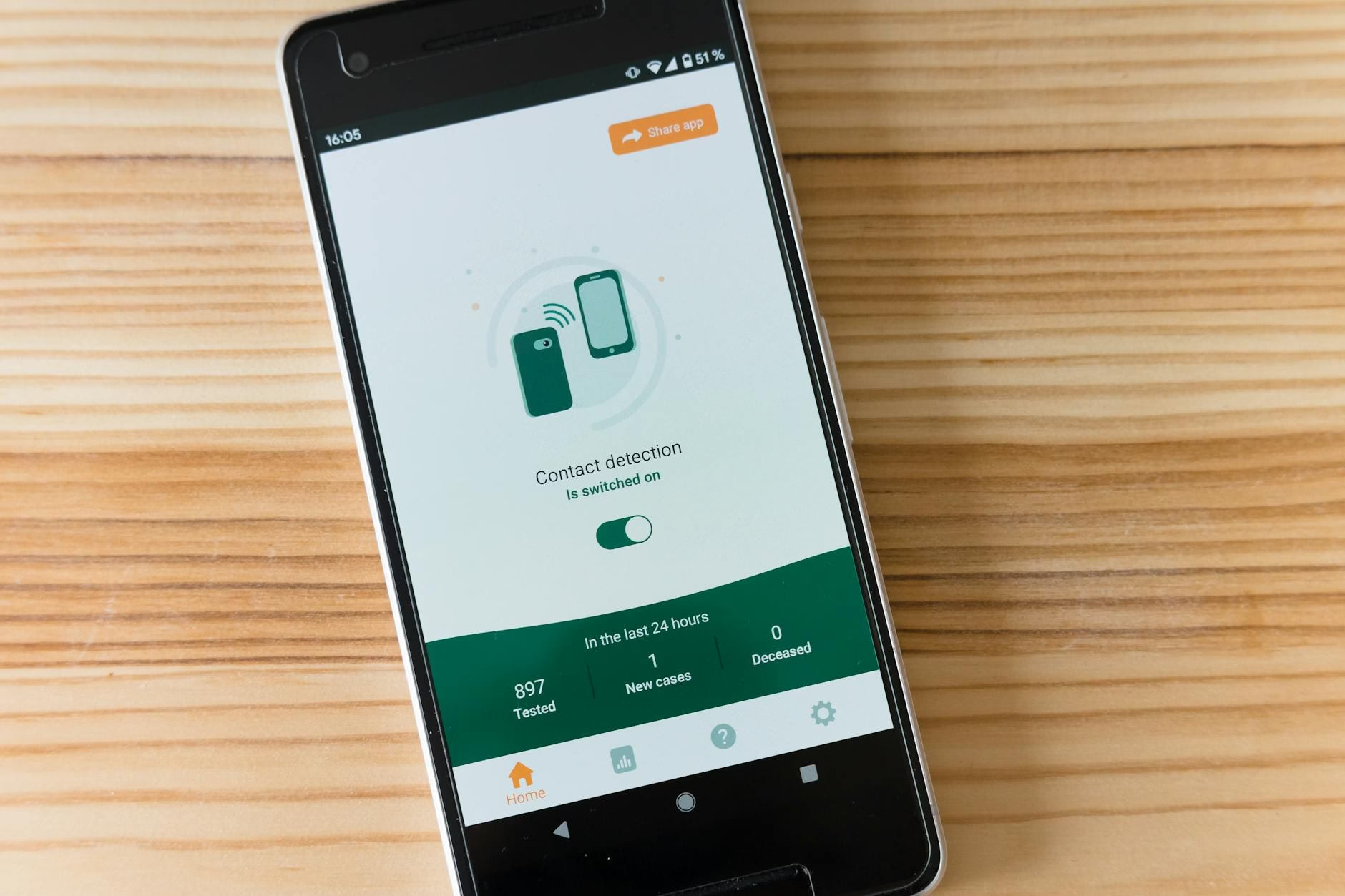

The implications of TurboQuant are significant for businesses relying on large language models (LLMs). By shrinking the KV cache (the keys and values a model stores for every token during generation) by up to a factor of six, companies can serve more users simultaneously or support longer contexts without needing additional resources.
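A quick back-of-the-envelope calculation shows what a sixfold reduction means in practice. The model shape below (32 layers, 8 KV heads, head dimension 128, 16-bit values) is a hypothetical configuration chosen for illustration and is not taken from Google's report. The helper `kv_cache_bytes` is likewise an assumed name.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch, bytes_per_value):
    """Size of the KV cache: one key and one value vector per token, head, and layer."""
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value

GIB = 1024 ** 3

# Hypothetical model shape and workload, for illustration only.
full = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128,
                      seq_len=32768, batch=1, bytes_per_value=2)
compressed = full / 6  # the "up to six times" reduction cited in the article

print(f"uncompressed: {full / GIB:.2f} GiB per sequence")
print(f"compressed:   {compressed / GIB:.2f} GiB per sequence")
```

Under these assumptions, the memory that held one 32K-token sequence could hold roughly six, or one sequence about six times as long.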

Key takeaways

- TurboQuant reduces AI memory requirements, specifically the KV cache, by up to a factor of six.

- This technology allows for improved performance in LLMs without losing accuracy.

- Businesses can serve more users simultaneously with reduced costs.

- The algorithm is deployable across various models without specific training.

In summary, Google’s TurboQuant represents a major leap forward in managing AI memory challenges. Companies should explore how this technology can benefit their operations and enhance their capabilities. For example, adopting TurboQuant could enable businesses to run larger models on existing hardware without additional investments.

FAQ

- What is TurboQuant? It’s a new algorithm from Google designed to significantly reduce the memory requirements of AI models.

- How does it work? It uses two stages, PolarQuant for compression and QJL for error correction, to store vectors in less memory.

- What are the benefits? Businesses can expect lower operational costs and improved model performance while serving more users simultaneously.
