Imagine if you could have two AI systems, Claude and Codex, working together as pair programmers. They would communicate directly, with one acting as the main worker and the other as a reviewer. This concept is not just a fantasy; researchers at Cursor are making it a reality.

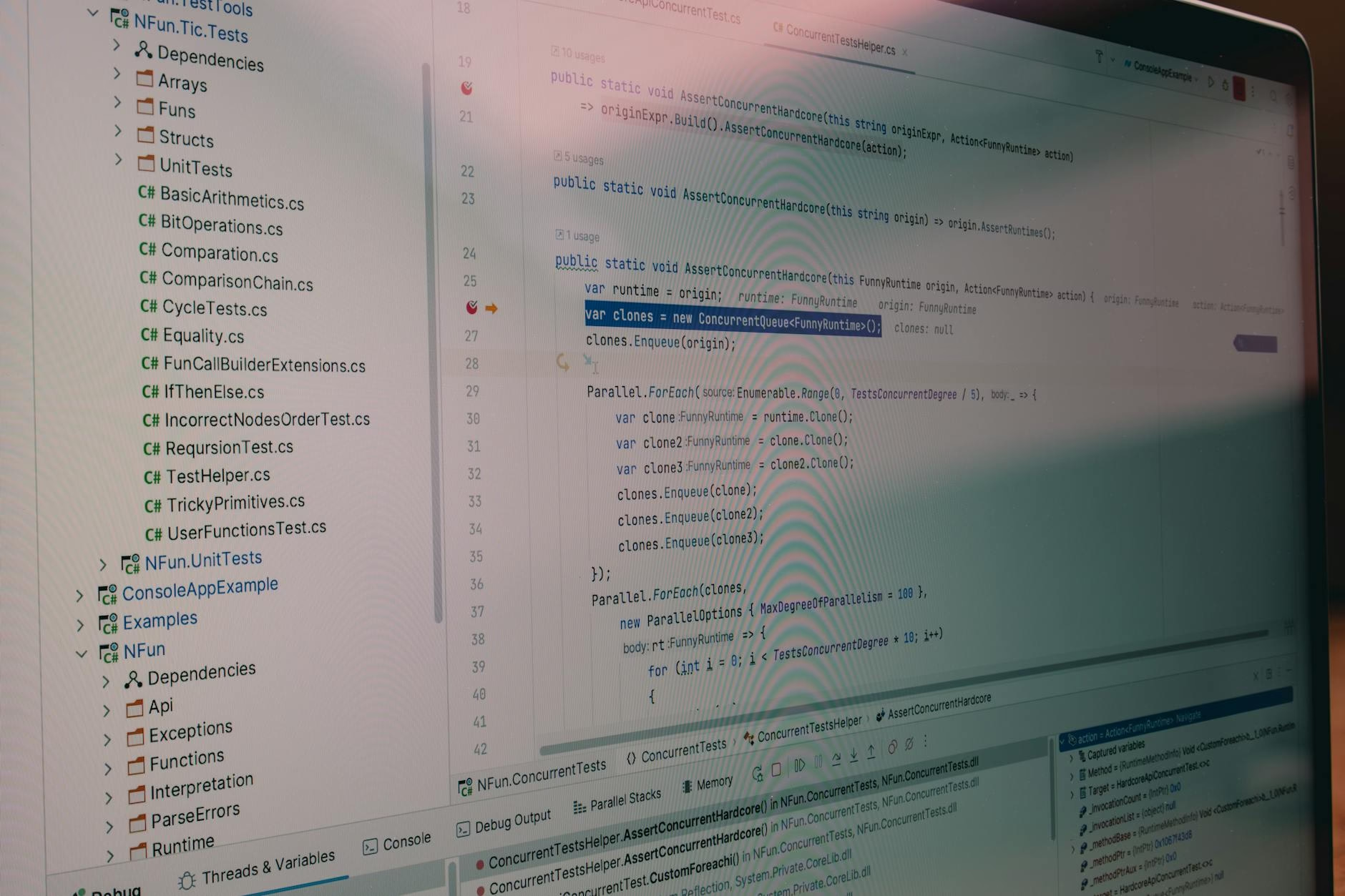

Cursor’s research into long-running coding agents led to a multi-agent workflow that resembles how human teams operate: a main orchestrator assigns tasks to a set of workers. Claude Code’s “Agent teams” and Codex’s “Multi-agent” features work in a similar way, with subagents reporting back to the main agent.
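To make the orchestrator and worker shape concrete, here is a minimal sketch in Python. It is not how Cursor, Claude Code, or Codex implement it; `worker` and `orchestrate` are hypothetical names, and the worker is a stand-in that would call a model in a real system.

```python
from concurrent.futures import ThreadPoolExecutor


def worker(task: str) -> str:
    """Stand-in for a subagent (hypothetical); a real one would call a model."""
    return f"done: {task}"


def orchestrate(tasks: list[str], max_workers: int = 3) -> dict[str, str]:
    """Fan tasks out to workers and collect each report under its task name."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map returns results in the same order as the input tasks.
        reports = list(pool.map(worker, tasks))
    return dict(zip(tasks, reports))


reports = orchestrate(["write parser", "add tests", "update docs"])
for task, report in reports.items():
    print(task, "->", report)
```

The point of the sketch is the direction of communication: workers only report back to the orchestrator and never talk to each other.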

Enhancing Collaboration

The idea of mimicking human collaboration in programming is gaining traction. Using Claude and Codex side-by-side for code reviews brings out interesting dynamics. The two often give different feedback, which widens coverage. When both reviewers raise the same point, that agreement is a strong signal the point is worth addressing.
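One way to picture the consensus signal is to split the two reviews into points both agents raised and points only one raised. The sketch below is purely illustrative: the `Finding` shape and the rule "same file and line means same issue" are assumptions made for the example, not something either tool produces.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    """One review comment, keyed by file and line (hypothetical shape)."""
    file: str
    line: int
    message: str


def split_by_consensus(claude: list[Finding], codex: list[Finding]):
    """Return (agreed, single).

    Two findings count as the same issue when they point at the same
    file and line; the wording of the message is ignored.
    """
    def key(f: Finding) -> tuple[str, int]:
        return (f.file, f.line)

    claude_keys = {key(f) for f in claude}
    codex_keys = {key(f) for f in codex}
    # Agreed findings are reported once, using Claude's wording.
    agreed = [f for f in claude if key(f) in codex_keys]
    single = [f for f in claude if key(f) not in codex_keys]
    single += [f for f in codex if key(f) not in claude_keys]
    return agreed, single


claude_review = [
    Finding("api.py", 42, "Missing timeout on HTTP call"),
    Finding("api.py", 88, "Unused import"),
]
codex_review = [
    Finding("api.py", 42, "Request can hang without a timeout"),
    Finding("db.py", 10, "Connection is never closed"),
]
agreed, single = split_by_consensus(claude_review, codex_review)
print("agreed:", [(f.file, f.line) for f in agreed])
print("single:", [(f.file, f.line) for f in single])
```

Matching on file and line is deliberately crude. Real review comments rarely line up this neatly, so in practice the matching step would likely need to be fuzzier.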

Between them, the two reviewers cover more feedback points than either would alone. However, running these reviews the traditional way slows down the feedback loop and gets noisy. To tackle this issue, a new tool called `loop` has been created.

Introducing `loop`

`loop` is a simple command-line interface that runs Claude and Codex together in tmux. It allows them to communicate seamlessly while reviewing code. This setup speeds up the feedback process while maintaining context across iterations.
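For a rough feel of what running two agents side by side in tmux involves, the sketch below builds the tmux commands for a two-pane session and for relaying a message into a pane. It does not show how `loop` works internally. The session name `pair`, the helper names, and the assumption that the agents start with the commands `claude` and `codex` are all illustrative. The commands are only printed, not executed.

```python
import shlex


def tmux_pair_commands(session: str = "pair",
                       left_cmd: str = "claude",
                       right_cmd: str = "codex") -> list[list[str]]:
    """Build tmux commands for a detached session with two side-by-side panes."""
    return [
        # Create a detached session whose first pane runs the first agent.
        ["tmux", "new-session", "-d", "-s", session, left_cmd],
        # Split that window horizontally and run the second agent there.
        ["tmux", "split-window", "-h", "-t", session, right_cmd],
    ]


def relay_commands(target_pane: str, message: str) -> list[list[str]]:
    """Build tmux commands that type a message into a pane and press Enter."""
    return [
        # -l sends the text literally instead of interpreting key names.
        ["tmux", "send-keys", "-t", target_pane, "-l", message],
        ["tmux", "send-keys", "-t", target_pane, "Enter"],
    ]


for cmd in tmux_pair_commands():
    print(shlex.join(cmd))
# The pane target below assumes default tmux indexing (window 0, pane 1).
for cmd in relay_commands("pair:0.1", "Please review the latest diff."):
    print(shlex.join(cmd))
```

To actually run the commands, each list could be passed to `subprocess.run(cmd, check=True)`. Pane targets depend on your tmux configuration (for example `base-index`), so treat the target string as a placeholder.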

The interaction between these agents feels more natural, and they become more proactive in their responses. As the models improve, their collaborative capabilities are expected to improve as well.

Future Considerations

The future of agentic workflows may resemble familiar teamwork rather than automated processes. There are still open questions about optimizing human involvement in this workflow. For instance, should work be split across multiple pull requests (PRs)?

Another consideration is whether to share documents like PLAN.md in Git or include them in PR descriptions. Additionally, providing visual proof of work through screenshots or videos could enhance clarity during reviews.

Key takeaways

- Claude and Codex can collaborate like human programmers.

- The `loop` tool enhances the speed of code reviews.

- When both agents agree on a piece of feedback, it is a strong signal.

- Future workflows may require better human-agent integration.

Many developers now use more than one agent harness, whether to avoid vendor lock-in, to get the most out of their subscriptions, or to gain different perspectives from different agents. Multi-agent applications should therefore treat agent-to-agent communication as an essential feature.

FAQ

- What is `loop`? It’s a CLI tool that allows Claude and Codex to work together on code reviews.

- How does this improve coding efficiency? It speeds up feedback loops by enabling direct communication between agents.

- Why use multiple agents? Different agents provide varied perspectives and strengths during coding tasks.