Last week, Google unveiled TurboQuant, a powerful new algorithm that addresses the memory challenges faced by AI models. Instead of simply increasing memory capacity, it focuses on reducing the amount needed. This innovative approach could change how businesses manage their AI systems.

TurboQuant works by compressing information represented as vectors in high-dimensional spaces. This is similar to what was depicted in the TV show Silicon Valley, where Pied Piper created a general-purpose compression algorithm. By shrinking the memory these vectors occupy, Google aims to enhance the performance and efficiency of large language models (LLMs).

Understanding the Memory Problem

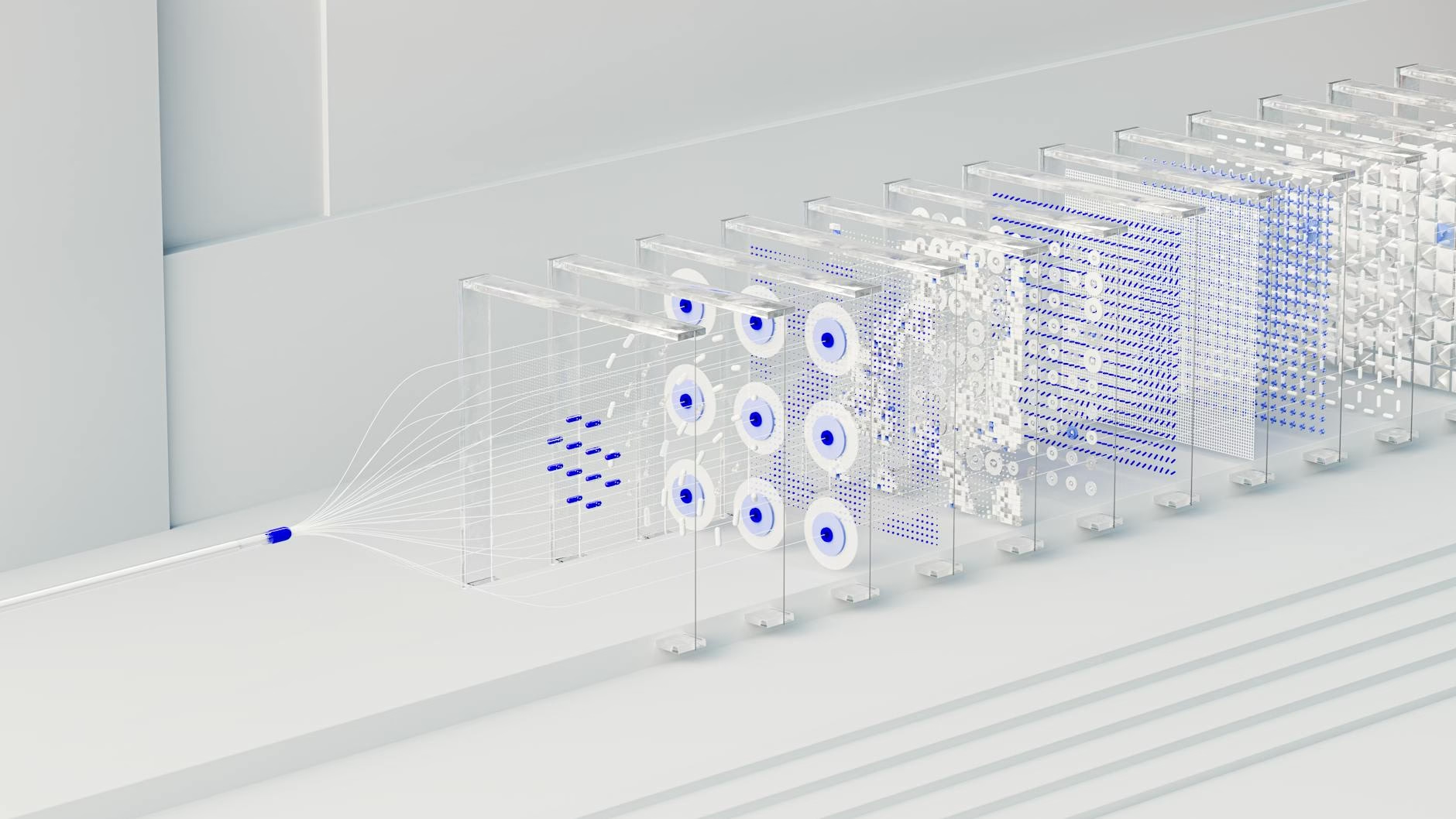

Large language models generate text one token at a time. Each new token depends on all previous tokens, which requires significant computational resources. The model uses an attention mechanism that calculates three vectors—query, key, and value—for every token.

This process can be resource-intensive: without some form of caching, generating each new token would mean recomputing the key and value vectors of every earlier token. Storing those vectors avoids the repeated work, but as conversations or data inputs grow longer, the demand for GPU memory rises with them.

The Role of KV Cache

The key-value (KV) cache stores the key and value vectors of tokens that have already been processed so they do not have to be recomputed. However, the cache grows with every token, and at long context lengths it can consume more memory than the model weights themselves. This creates bottlenecks when trying to serve multiple users or support longer contexts.
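To get a feel for how quickly the cache grows, here is a rough back-of-the-envelope sketch. The model shape (32 layers, 32 key-value heads, head dimension 128, 16-bit values, 7 billion parameters) is a hypothetical configuration chosen only for illustration and does not come from the TurboQuant announcement.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch=1, bytes_per_value=2):
    """Bytes needed to hold keys and values for every layer, head, and token."""
    # The leading 2 accounts for storing both a key and a value vector.
    return 2 * layers * kv_heads * head_dim * seq_len * batch * bytes_per_value


# Hypothetical model shape, chosen only for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 32, 32, 128
WEIGHT_BYTES = 7_000_000_000 * 2  # 7 billion parameters at 16 bits each

per_token = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len=1)
print(f"per token: {per_token / 1024:.0f} KiB")

for seq_len in (4_096, 32_768, 131_072):
    size = kv_cache_bytes(LAYERS, KV_HEADS, HEAD_DIM, seq_len)
    print(f"{seq_len:>7} tokens: {size / 2**30:6.1f} GiB "
          f"({size / WEIGHT_BYTES:.2f}x the weights)")
```

Under these assumed numbers, a single sequence's cache overtakes the weights somewhere between the 4,096-token and 32,768-token rows, and the total scales linearly with the number of users served at once.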

Caching trades computation for memory: businesses can reduce computational costs, but at the expense of increased memory requirements. Finding a balance between computation and memory usage is essential for optimizing performance.
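The trade-off can be made concrete with a toy example. The sketch below is a minimal single-head attention loop in plain Python, not how any production LLM is implemented. The function names and the tiny random weights are assumptions made for illustration. It decodes the same sequence twice, once recomputing keys and values at every step and once reusing a cache, and counts the projections each approach performs.

```python
import math
import random


def project(x, w):
    """Multiply row vector x by matrix w (given as a list of rows)."""
    return [sum(xi * wij for xi, wij in zip(x, col)) for col in zip(*w)]


def attend(q, keys, values):
    """Softmax-weighted average of values, scored by scaled dot product."""
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(len(q)) for k in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [sum(w * v[j] for w, v in zip(weights, values)) / total
            for j in range(len(values[0]))]


def decode_without_cache(tokens, wq, wk, wv):
    outputs, projections = [], 0
    for t in range(1, len(tokens) + 1):
        prefix = tokens[:t]
        # Every step recomputes keys and values for the whole prefix.
        keys = [project(x, wk) for x in prefix]
        values = [project(x, wv) for x in prefix]
        projections += 2 * t
        outputs.append(attend(project(prefix[-1], wq), keys, values))
    return outputs, projections


def decode_with_cache(tokens, wq, wk, wv):
    outputs, projections = [], 0
    k_cache, v_cache = [], []  # grows by one entry per token: the memory cost
    for x in tokens:
        k_cache.append(project(x, wk))
        v_cache.append(project(x, wv))
        projections += 2
        outputs.append(attend(project(x, wq), k_cache, v_cache))
    return outputs, projections


rng = random.Random(0)
dim, length = 4, 8
tokens = [[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(length)]
wq, wk, wv = ([[rng.uniform(-1, 1) for _ in range(dim)] for _ in range(dim)]
              for _ in range(3))

_, cost_plain = decode_without_cache(tokens, wq, wk, wv)
_, cost_cached = decode_with_cache(tokens, wq, wk, wv)
print("key/value projections without cache:", cost_plain)
print("key/value projections with cache:   ", cost_cached)
```

Both loops produce the same attention outputs. The uncached version's work grows quadratically with sequence length, while the cached version's work grows linearly and its stored vectors grow by one key and one value per token.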

How TurboQuant Works

TurboQuant consists of two main stages: PolarQuant and QJL (Quantized Johnson-Lindenstrauss). PolarQuant converts vector representations from Cartesian to polar coordinates. This transformation allows for better compression because it takes advantage of predictable patterns in high-dimensional spaces.
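To illustrate the coordinate change, the following sketch pairs up a vector's coordinates, converts each pair to a radius and an angle, and stores the angle in a few bits. It is a deliberately simplified illustration of polar-coordinate quantization in general, not Google's PolarQuant implementation. The pairing scheme, the uniform angle grid, the unquantized radius, and all function names are assumptions.

```python
import math


def to_polar_pairs(vec):
    """Convert consecutive (x, y) coordinate pairs into (radius, angle)."""
    if len(vec) % 2:
        raise ValueError("vector length must be even")
    return [(math.hypot(x, y), math.atan2(y, x))
            for x, y in zip(vec[0::2], vec[1::2])]


def quantize_angle(theta, bits):
    """Map an angle to the nearest of 2**bits evenly spaced grid points."""
    levels = 1 << bits
    step = 2 * math.pi / levels
    return round((theta % (2 * math.pi)) / step) % levels


def dequantize_angle(code, bits):
    return code * 2 * math.pi / (1 << bits)


def compress(vec, bits):
    # Assumption: the radius is kept at full precision in this sketch.
    return [(r, quantize_angle(theta, bits)) for r, theta in to_polar_pairs(vec)]


def decompress(pairs, bits):
    out = []
    for r, code in pairs:
        theta = dequantize_angle(code, bits)
        out.extend((r * math.cos(theta), r * math.sin(theta)))
    return out


vec = [3.0, 4.0, -1.0, 0.5]
restored = decompress(compress(vec, bits=4), bits=4)
print("original:", vec)
print("restored:", [round(v, 3) for v in restored])
```

In this sketch each pair keeps its exact length and only its direction is rounded, so the reconstruction error of a pair is bounded by its radius times half the angular grid spacing.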

The second stage, QJL, corrects any errors introduced during quantization without adding extra memory overhead. Together, these stages achieve significant reductions in KV cache size while maintaining accuracy across various models.
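As a rough illustration of the idea behind a quantized Johnson-Lindenstrauss transform, the sketch below projects a key vector with a random Gaussian matrix, keeps only the sign of each projected coordinate, and then estimates the inner product with an unquantized query. How TurboQuant applies this step to the error left by the first stage is not shown here. The function names, the dimensions, and the choice to store one norm per key are assumptions made for illustration.

```python
import math
import random


def gaussian_matrix(rows, cols, seed=0):
    """Random projection matrix with independent standard normal entries."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def quantize_key(key, proj):
    """Keep one sign bit per projected coordinate, plus the key's norm."""
    signs = [1 if dot(row, key) >= 0 else -1 for row in proj]
    return signs, math.sqrt(dot(key, key))


def estimate_inner_product(query, signs, key_norm, proj):
    """Estimate <query, key> from the key's sign bits and norm.

    For a standard normal row s, E[sign(s.key) * (s.query)] equals
    sqrt(2/pi) * <query, key> / |key|, so rescaling the average by
    sqrt(pi/2) * |key| gives an unbiased estimate.
    """
    acc = sum(s * dot(row, query) for s, row in zip(signs, proj))
    return math.sqrt(math.pi / 2) / len(proj) * key_norm * acc


rng = random.Random(42)
dim, sketch_size = 16, 2000
key = [rng.uniform(-1, 1) for _ in range(dim)]
query = [rng.uniform(-1, 1) for _ in range(dim)]
proj = gaussian_matrix(sketch_size, dim, seed=7)

signs, key_norm = quantize_key(key, proj)
print("true inner product:     ", dot(query, key))
print("estimated inner product:", estimate_inner_product(query, signs, key_norm, proj))
```

The estimate is noisy for any single draw, and its spread shrinks as the number of projected coordinates grows. Only the key side is reduced to one bit per coordinate, while the query is projected at full precision.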

Impact on Businesses

The introduction of TurboQuant could lead to a sixfold reduction in KV cache size without losing accuracy. This improvement means that businesses can run more efficient AI systems with lower hardware costs.
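To see what a sixfold smaller cache could mean for serving capacity, here is a small worked calculation. The GPU size, weight size, and per-sequence cache size are hypothetical figures picked for illustration. Only the factor of six comes from the claim above, and the sketch ignores activations and other runtime memory.

```python
GIB = 1024 ** 3


def max_concurrent_sequences(gpu_bytes, weight_bytes, kv_bytes_per_sequence,
                             compression_ratio=1):
    """How many sequences' KV caches fit in the memory left after the weights."""
    free_bytes = gpu_bytes - weight_bytes
    if free_bytes <= 0:
        return 0
    # A cache compressed by `compression_ratio` fits that many times more often.
    return int(free_bytes * compression_ratio // kv_bytes_per_sequence)


# Hypothetical deployment: 80 GiB GPU, 14 GiB of weights, 4 GiB of cache per sequence.
gpu, weights, kv_per_seq = 80 * GIB, 14 * GIB, 4 * GIB

before = max_concurrent_sequences(gpu, weights, kv_per_seq)
after = max_concurrent_sequences(gpu, weights, kv_per_seq, compression_ratio=6)
print("sequences per GPU, uncompressed cache:", before)  # 16
print("sequences per GPU, 6x smaller cache:  ", after)   # 99
```

Under these assumed numbers the same GPU goes from 16 to 99 concurrent sequences, which is where the potential hardware savings would come from.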

As a result, companies might find it easier to scale their AI applications while managing expenses effectively. For instance, smaller firms could leverage this technology to compete with larger players by reducing their infrastructure costs.

Key Takeaways

- TurboQuant could shrink the KV cache, a major source of LLM memory use, by up to six times.

- The algorithm improves performance without compromising accuracy.

- Businesses can scale their AI applications more efficiently.

- This innovation may shift market dynamics in the tech industry.

FAQ

- What is TurboQuant? It’s an algorithm developed by Google that reduces memory requirements for AI models.

- How does it improve efficiency? By compressing data representations and correcting quantization errors without extra overhead.

- What industries can benefit from TurboQuant? Any industry using large language models or high-dimensional data processing can benefit significantly.

For the original report, see the source article.