Ollama’s latest release is noticeably faster on Apple silicon. It is now powered by MLX, Apple’s machine learning framework, which brings faster responses and better efficiency. The update particularly benefits personal assistants like OpenClaw and coding agents such as Claude Code.

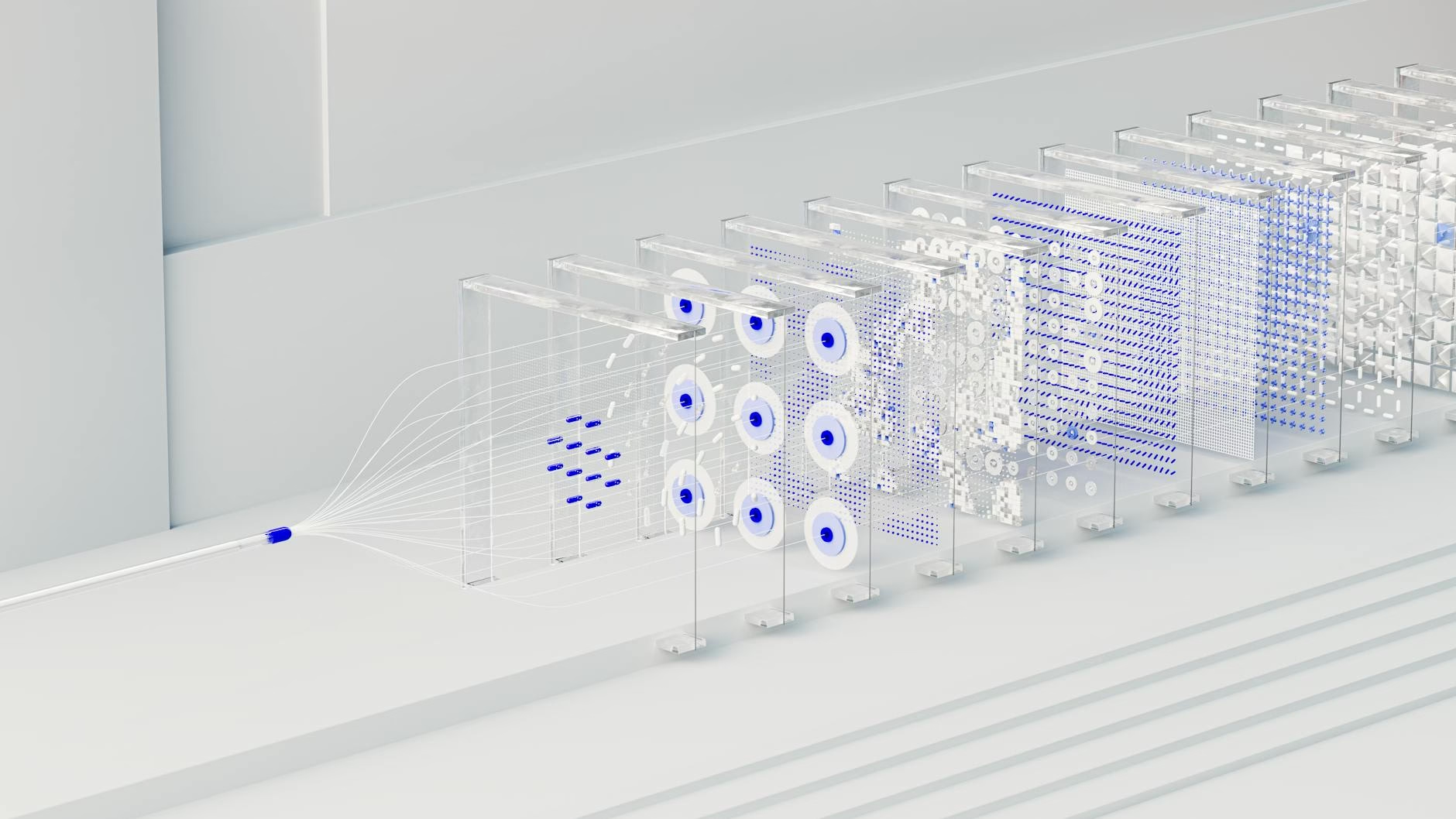

Running on MLX lets Ollama take advantage of the unified memory architecture of Apple’s chips. Users can expect a speedup on all Apple silicon devices, and the gains are most pronounced on M5, M5 Pro, and M5 Max chips.

Key takeaways

- Ollama now runs faster thanks to Apple’s MLX framework.

- Improved performance benefits personal assistants and coding agents.

- New NVFP4 support enhances response quality while saving resources.

- Upgraded caching leads to more efficient task handling.

- Users can start using the new features with Ollama 0.19.

On the M5 series, Ollama uses the GPU Neural Accelerators to shorten time to first token (TTFT) and to raise generation speed. In testing, for example, Ollama reached 1851 tokens per second during prefill, the phase in which the prompt is processed before the first output token appears. In practice, coding agents and personal assistants start responding sooner.
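To put the prefill figure in perspective, the short Python sketch below converts a prompt length into an approximate prefill time. The prompt sizes are hypothetical, and the estimate assumes the quoted 1851 tokens per second holds for your model and hardware, which it may not. Real TTFT also includes overhead that this arithmetic ignores.

```python
# Rough estimate of prefill time from a prefill throughput figure.
# 1851 tokens/s is the number quoted in the article; actual rates vary
# with the chip, the model, and the quantization in use.
PREFILL_TOKENS_PER_SECOND = 1851


def prefill_seconds(prompt_tokens: int,
                    rate: float = PREFILL_TOKENS_PER_SECOND) -> float:
    """Return the approximate seconds needed to process the prompt."""
    if prompt_tokens < 0 or rate <= 0:
        raise ValueError("prompt_tokens must be >= 0 and rate must be > 0")
    return prompt_tokens / rate


# Hypothetical prompt sizes, chosen only for illustration.
for tokens in (1_000, 8_000, 32_000):
    print(f"{tokens:>6} prompt tokens -> ~{prefill_seconds(tokens):.2f} s of prefill")
```

Long agent prompts are where prefill speed matters most, since every uncached prompt token has to be processed before generation can begin.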

Support for the NVFP4 format helps maintain model accuracy while reducing memory requirements. It also lets Ollama users get results comparable to those in production environments, and that consistency should improve as more inference providers adopt the format.
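The memory saving can be approximated with simple arithmetic. The sketch below assumes NVFP4 stores each weight in 4 bits plus one 8-bit scale per block of 16 weights, following NVIDIA’s public description of the format. It ignores per-tensor scales, any layers kept at higher precision, and runtime memory such as the cache. The 35-billion parameter count is taken from the "35b" in the model tag used later in this article, so treat the numbers as a rough guide only.

```python
# Back-of-the-envelope weight memory for a model at different precisions.
# Assumption: NVFP4 = 4-bit values + one 8-bit scale per 16-value block,
# i.e. about 4.5 bits per weight on average.
NVFP4_BITS_PER_WEIGHT = 4 + 8 / 16
FP16_BITS_PER_WEIGHT = 16


def weight_gigabytes(parameters: float, bits_per_weight: float) -> float:
    """Return approximate weight storage in GB (1 GB = 1e9 bytes)."""
    return parameters * bits_per_weight / 8 / 1e9


parameters = 35e9  # assumed from the "35b" in the model tag

fp16_gb = weight_gigabytes(parameters, FP16_BITS_PER_WEIGHT)
nvfp4_gb = weight_gigabytes(parameters, NVFP4_BITS_PER_WEIGHT)

print(f"16-bit weights: ~{fp16_gb:.1f} GB")
print(f"NVFP4 weights:  ~{nvfp4_gb:.1f} GB")
print(f"Reduction:      ~{fp16_gb / nvfp4_gb:.1f}x")
```

Under these assumptions the weights shrink from roughly 70 GB to roughly 20 GB. That is the difference between a model that cannot fit in a Mac’s unified memory and one that can.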

Enhanced Caching for Better Performance

Another key improvement is Ollama’s upgraded caching system. The new design reuses cached data across conversations, which lowers memory usage and avoids repeated work when several conversations share a prompt, as they do with tools like Claude Code.

Intelligent checkpoints improve responsiveness further by storing snapshots at strategic points in the conversation. Less of each new prompt has to be reprocessed, so replies arrive sooner.
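The idea behind reusing a cache across conversations can be illustrated with a toy Python sketch. This is not Ollama’s implementation: a real cache stores model state rather than token tuples, and the article does not describe how checkpoint positions are chosen. The class name `PrefixCheckpointCache` and the fixed checkpoint interval are hypothetical, picked only to show how a shared prefix reduces the number of tokens that need processing.

```python
# Toy model of prefix reuse with checkpoints. It only counts tokens;
# a real inference cache would store the model's internal state.
class PrefixCheckpointCache:
    def __init__(self, interval: int = 4):
        if interval <= 0:
            raise ValueError("interval must be positive")
        self.interval = interval   # hypothetical fixed checkpoint spacing
        self._checkpoints = set()  # token-prefix tuples already processed

    def _longest_cached_prefix(self, tokens: list) -> int:
        """Return the length of the longest checkpointed prefix of tokens."""
        for end in range(len(tokens), 0, -1):
            if tuple(tokens[:end]) in self._checkpoints:
                return end
        return 0

    def process(self, tokens: list) -> int:
        """Process a prompt; return how many tokens were NOT served from cache."""
        reused = self._longest_cached_prefix(tokens)
        # Snapshot at every interval boundary and at the end of the prompt.
        for end in range(self.interval, len(tokens) + 1, self.interval):
            self._checkpoints.add(tuple(tokens[:end]))
        self._checkpoints.add(tuple(tokens))
        return len(tokens) - reused


shared = "you are a coding agent use the tools provided".split()  # 9 tokens
cache = PrefixCheckpointCache(interval=4)

print(cache.process(shared + ["fix", "the", "bug"]))   # 12: nothing cached yet
print(cache.process(shared + ["write", "a", "test"]))  # 4: resumes from the 8-token checkpoint
print(cache.process(shared + ["write", "a", "test"]))  # 0: the whole prompt is cached
```

The second conversation shares nine tokens with the first but resumes from the checkpoint at eight, which shows why checkpoint placement affects how much work is saved.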

Getting Started with Ollama 0.19

To try these enhancements, download Ollama 0.19 and make sure your Mac has more than 32 GB of unified memory. Coding agents and personal assistants can then be launched with a single command each:

ollama launch claude --model qwen3.5:35b-a3b-coding-nvfp4

ollama launch openclaw --model qwen3.5:35b-a3b-coding-nvfp4
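Before running either command, you may want to confirm that the machine meets the memory guidance above. The Python sketch below reads total physical memory through `os.sysconf`, which is available on macOS and Linux. The helper names are hypothetical, and treating "32GB" as 32 GiB is an assumption, since the article does not say which unit it means.

```python
import os

GIB = 1024 ** 3


def total_memory_bytes() -> int:
    """Return total physical memory in bytes (works on macOS and Linux)."""
    return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES")


def meets_memory_guidance(total_bytes: int, minimum_gib: int = 32) -> bool:
    """True if memory is strictly greater than the stated minimum.

    The article asks for "more than 32GB"; reading that as 32 GiB is an
    assumption made for this sketch.
    """
    return total_bytes > minimum_gib * GIB


total = total_memory_bytes()
print(f"Total memory: {total / GIB:.1f} GiB")
print("Meets guidance" if meets_memory_guidance(total) else "Below guidance")
```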

Future Developments

The Ollama team is working on support for additional models. It also plans to make it easier to import custom models fine-tuned on supported architectures, and it will keep expanding the list of supported architectures.

FAQ

- What is Ollama? Ollama is a tool for running large language models locally. Coding agents such as Claude Code and personal assistants like OpenClaw can use the models it serves.

- How does MLX improve performance? MLX is Apple’s machine learning framework. Running on it lets Ollama use the unified memory architecture of Apple silicon and, on M5-series chips, the GPU Neural Accelerators.

- What are the benefits of NVFP4? NVFP4 maintains model accuracy while reducing memory requirements during inference.
